uqlm: Uncertainty Quantification for Language Models#

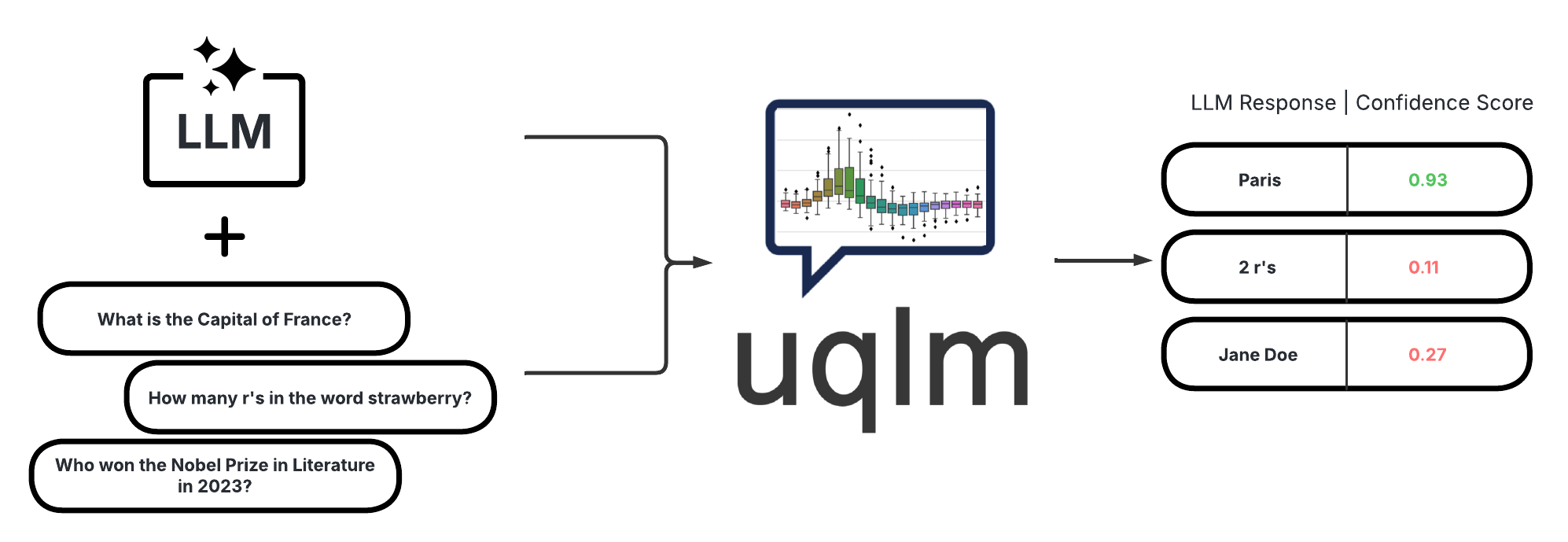

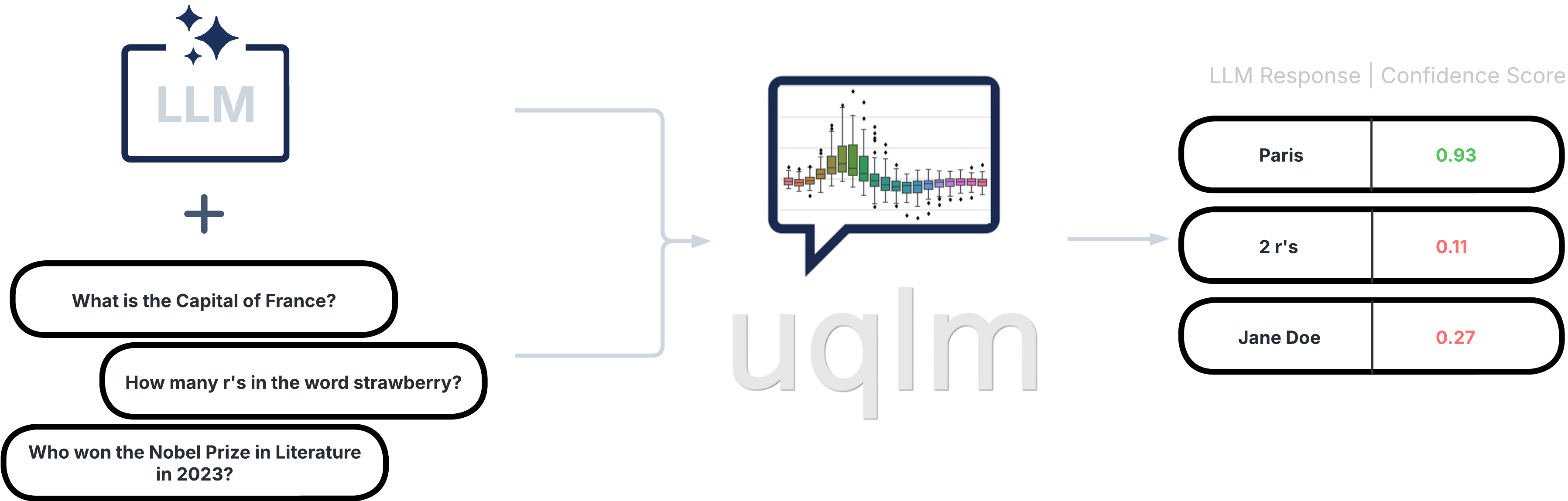

A Python library for LLM hallucination detection using state-of-the-art uncertainty quantification techniques. Each scorer returns a confidence score between 0 and 1, where higher scores indicate lower hallucination likelihood.

Scorer Types#

UQLM provides five categories of scorers. Click a card to explore the options.

Measure consistency across multiple LLM generations. Compatible with any model with no access to internals needed.

Leverage token probabilities for fast, free single-generation scoring. No extra LLM calls required.

Use one or more LLMs to evaluate response reliability. Highly customizable via prompt engineering.

Combine multiple scorers via weighted averaging for more robust confidence estimates. Tunable for advanced users.

Score uncertainty at the claim level for long-form responses, with support for uncertainty-aware response refinement.